Moving virtual objects with your mind sounds like science fiction, but under the hood it is really an intensive signal processing and classification problem.

For my Final Year Project, I led a team of four engineers to build an end-to-end Brain-Computer Interface (BCI). The goal was to bypass physical movement entirely and map a user's motor intentions directly to a 3D animated hand in real time. This work was eventually published as a first-author paper at IEEE ICICIS.

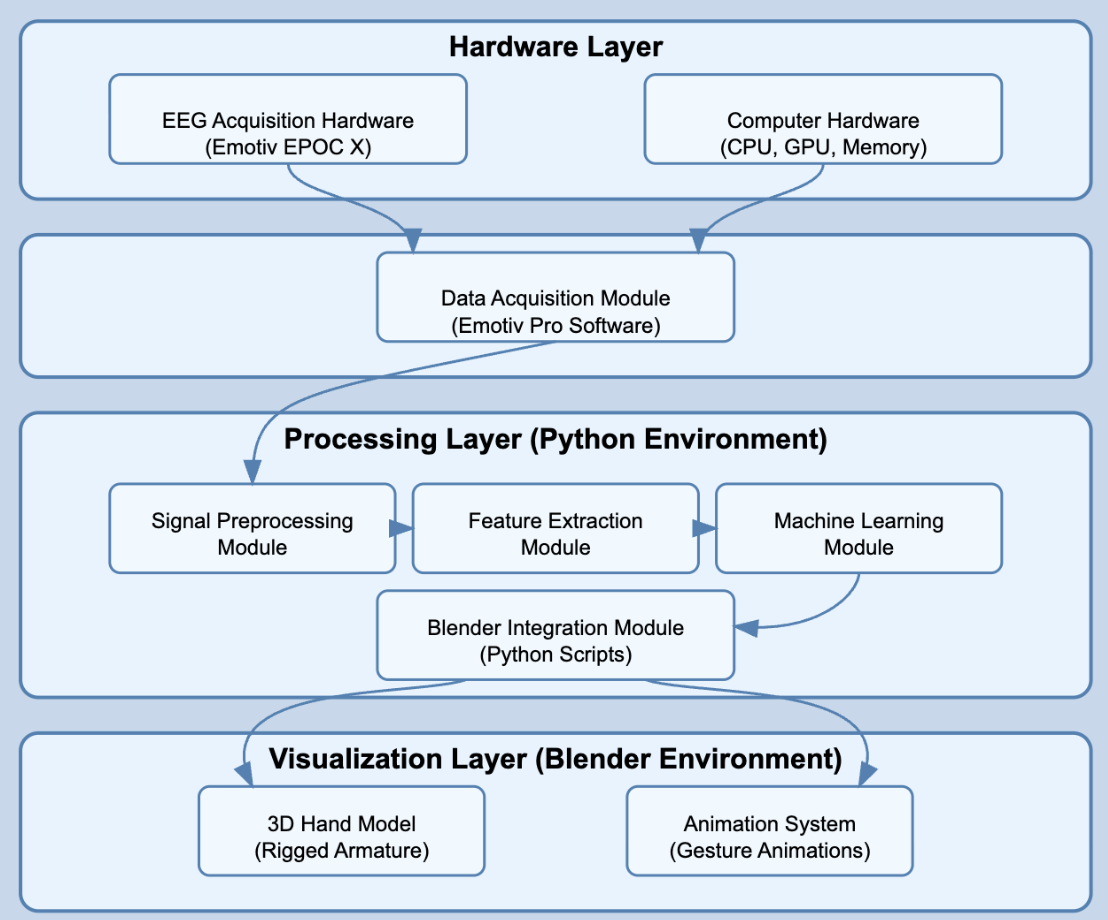

The Hardware & Stack

Tech Stack

- Hardware: Emotiv EPOC X Headset (256 Hz sampling)

- Processing: Python, Fast Fourier Transforms (FFT), Wavelets

- Machine Learning: Scikit-learn (KNN)

- Visualization: Blender Python API & Unity

Cutting Through the Noise

Raw EEG data is incredibly noisy. Every blink, heartbeat, or slight muscle twitch creates an artifact that can drown out the actual brainwaves tied to motor intent. We recorded baseline signals from participants and applied Fast Fourier Transforms (FFT) alongside wavelet transforms to extract frequency-domain and time-frequency features that are far less sensitive to that noise than the raw samples.
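To make the FFT step concrete, here is a minimal sketch of band-power extraction from a single channel. It is an illustration rather than the project's pipeline: the band edges, the one-second window, the Hann taper, and the `band_powers` helper are all assumptions, and the input is a synthetic signal instead of a real recording. Only the 256 Hz sampling rate comes from the headset.

```python
import numpy as np

FS = 256  # Hz, the EPOC X sampling rate listed in the stack

# Conventional EEG band edges in Hz (assumed, not the project's exact values).
BANDS = {"theta": (4, 8), "alpha": (8, 13), "beta": (13, 30)}


def band_powers(window, fs=FS, bands=BANDS):
    """Return the mean spectral power per band for a 1-D, single-channel window."""
    window = np.asarray(window, dtype=float)
    window = window - window.mean()              # remove the DC offset
    tapered = window * np.hanning(len(window))   # taper to reduce spectral leakage
    spectrum = np.abs(np.fft.rfft(tapered)) ** 2
    freqs = np.fft.rfftfreq(len(window), d=1.0 / fs)
    return {
        name: float(spectrum[(freqs >= lo) & (freqs < hi)].mean())
        for name, (lo, hi) in bands.items()
    }


# Synthetic one-second window: a 10 Hz (alpha-band) sine plus weak noise.
rng = np.random.default_rng(0)
t = np.arange(FS) / FS
signal = np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(FS)

features = band_powers(signal)
print(features)
```

For the synthetic window, the alpha value dominates the other two bands, which is the kind of contrast a classifier can pick up on. A real feature vector would repeat this per channel and add the wavelet coefficients.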

Once we isolated the features related to specific hand gesture intentions, we trained a K-Nearest Neighbors (KNN) classifier. The model successfully distinguished between different gesture intentions with an accuracy of 97.63%.
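The classification step can be sketched with scikit-learn as follows. Everything here is illustrative: the feature vectors are synthetic stand-ins for band-power features, the two labels and `n_neighbors=5` are assumptions, and the resulting score says nothing about the accuracy reported above. Scaling is included because KNN is distance-based, so features with large ranges would otherwise dominate.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)

# Synthetic 3-feature vectors per gesture (stand-ins for real EEG features).
open_hand = rng.normal(loc=[1.0, 3.0, 2.0], scale=0.3, size=(100, 3))
close_fist = rng.normal(loc=[3.0, 1.0, 2.5], scale=0.3, size=(100, 3))

X = np.vstack([open_hand, close_fist])
y = np.array(["open_hand"] * 100 + ["close_fist"] * 100)

# Hold out a stratified test split so the score is not measured on training data.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Standardize features, then classify by majority vote of the 5 nearest neighbors.
model = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5))
model.fit(X_train, y_train)

accuracy = model.score(X_test, y_test)
prediction = model.predict([[1.0, 3.0, 2.0]])[0]
print(f"held-out accuracy: {accuracy:.2f}, sample prediction: {prediction}")
```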

Real-Time 3D Rendering

Classifying the signal was only step one. We wrote a custom Python controller within Blender (using bpy) that listened for live predictions from our classification script. The moment the model predicted a "close fist" or "open hand" intent from the brainwaves, the rigged 3D hand model in Blender executed the animation.
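One way such a listener could work is sketched below. The transport is an assumption: this version sends predicted labels as UDP datagrams on localhost, and the action names in `ACTIONS` plus the `make_listener` and `poll_prediction` helpers are hypothetical. The sketch runs outside Blender; inside Blender, a function like `poll_prediction` could be called periodically from a callback registered with `bpy.app.timers.register`, which would then trigger the matching animation on the rigged hand.

```python
import select
import socket

# Hypothetical mapping from predicted labels to animation action names.
ACTIONS = {"close_fist": "CloseFist", "open_hand": "OpenHand"}


def make_listener(host="127.0.0.1", port=0):
    """Open a non-blocking UDP socket; port 0 lets the OS pick a free port."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((host, port))
    sock.setblocking(False)
    return sock


def poll_prediction(sock):
    """Drain pending datagrams and return the action for the newest label.

    Returns None when nothing is pending or the label is unknown, so the
    caller never blocks the render loop waiting for data.
    """
    latest = None
    while True:
        try:
            data, _addr = sock.recvfrom(1024)
        except BlockingIOError:
            break  # queue is empty
        latest = data.decode("utf-8").strip()
    return ACTIONS.get(latest)


# Demo: the classifier side sends a label, the listener side picks it up.
listener = make_listener()
sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
sender.sendto(b"close_fist", listener.getsockname())

select.select([listener], [], [], 1.0)  # wait up to 1 s for the datagram
action = poll_prediction(listener)
print(action)

sender.close()
```

Keeping only the newest label means a slow frame drops stale predictions instead of queueing them, which matters more for a responsive hand than replaying every intermediate result.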

It is an incredible feeling to sit perfectly still, think about moving your hand, and watch a virtual hand on your screen react in real time.