ViziAssist was a team project built by three undergrads during hackathon season, inspired by one simple gap: advanced road safety tech is mostly designed for fully sighted drivers and expensive vehicles. We wanted something practical, affordable, and usable on Indian roads right now.

Instead of adding another screen-heavy ADAS interface, we designed a screenless assistive system. The model detects road obstacles, and the system maps each detection to a peripheral LED signal so the driver gets fast alerts that demand little attention. Our work was later accepted at CVIP 2024 and published in Springer's CCIS series.

The Hardware Stack

Specs

- Compute: NVIDIA Jetson Nano
- Vision: Raspberry Pi Camera Module (CSI)
- Model: YOLOv7 (fine-tuned on the IDD dataset)
- Output: 5-pin GPIO LED array

The "Anti-UI" Architecture

The core idea was to keep output intuitive: detect classes like buses, cars, riders, and pedestrians with YOLOv7, then trigger dedicated GPIO LEDs on the Jetson Nano. That design choice made the system usable without requiring users to parse dense visual dashboards.
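The class-to-LED idea can be sketched in a few lines of Python. This is a minimal illustration, not our deployed code: the `CLASS_TO_PIN` table, the pin numbers, the `min_conf` threshold, and the `write_pin` callback are all hypothetical names and values chosen for the example. Detections are assumed to arrive as `(class name, confidence)` pairs, one list per frame.

```python
from typing import Callable, Dict, Iterable, Tuple

# Hypothetical mapping from detected class to a board pin.
# The pin numbers are placeholders, not the wiring used on the prototype.
CLASS_TO_PIN: Dict[str, int] = {
    "bus": 11,
    "car": 13,
    "rider": 15,
    "person": 16,
    "autorickshaw": 18,
}

Detection = Tuple[str, float]  # (class name, confidence score)


def led_states(detections: Iterable[Detection], min_conf: float = 0.5) -> Dict[int, bool]:
    """Return {pin: on/off} for every mapped pin, given one frame's detections."""
    active = {
        CLASS_TO_PIN[name]
        for name, conf in detections
        if name in CLASS_TO_PIN and conf >= min_conf  # ignore unmapped or weak detections
    }
    return {pin: pin in active for pin in CLASS_TO_PIN.values()}


def apply_states(states: Dict[int, bool], write_pin: Callable[[int, bool], None]) -> None:
    """Push the computed states to hardware through an injected writer."""
    for pin, on in states.items():
        write_pin(pin, on)


if __name__ == "__main__":
    frame = [("bus", 0.91), ("person", 0.42), ("rider", 0.77), ("truck", 0.88)]
    # Print instead of touching real GPIO so the sketch runs anywhere.
    apply_states(led_states(frame), lambda pin, on: print(pin, "ON" if on else "off"))
```

Keeping the decision logic separate from the hardware write makes it testable off-device. On the Jetson Nano, `write_pin` could plausibly wrap the `Jetson.GPIO` package, calling `GPIO.output(pin, GPIO.HIGH)` or `GPIO.output(pin, GPIO.LOW)` after `GPIO.setup(pin, GPIO.OUT)`.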

Deployment & Outcomes

We tested the prototype on live roads in Pune and Chandigarh, iterating on edge-device constraints, camera placement, and alert timing. The project also reached the pre-finale round at KPIT Sparkle (top 100 teams nationally), which gave us strong industry feedback on real-world deployment.

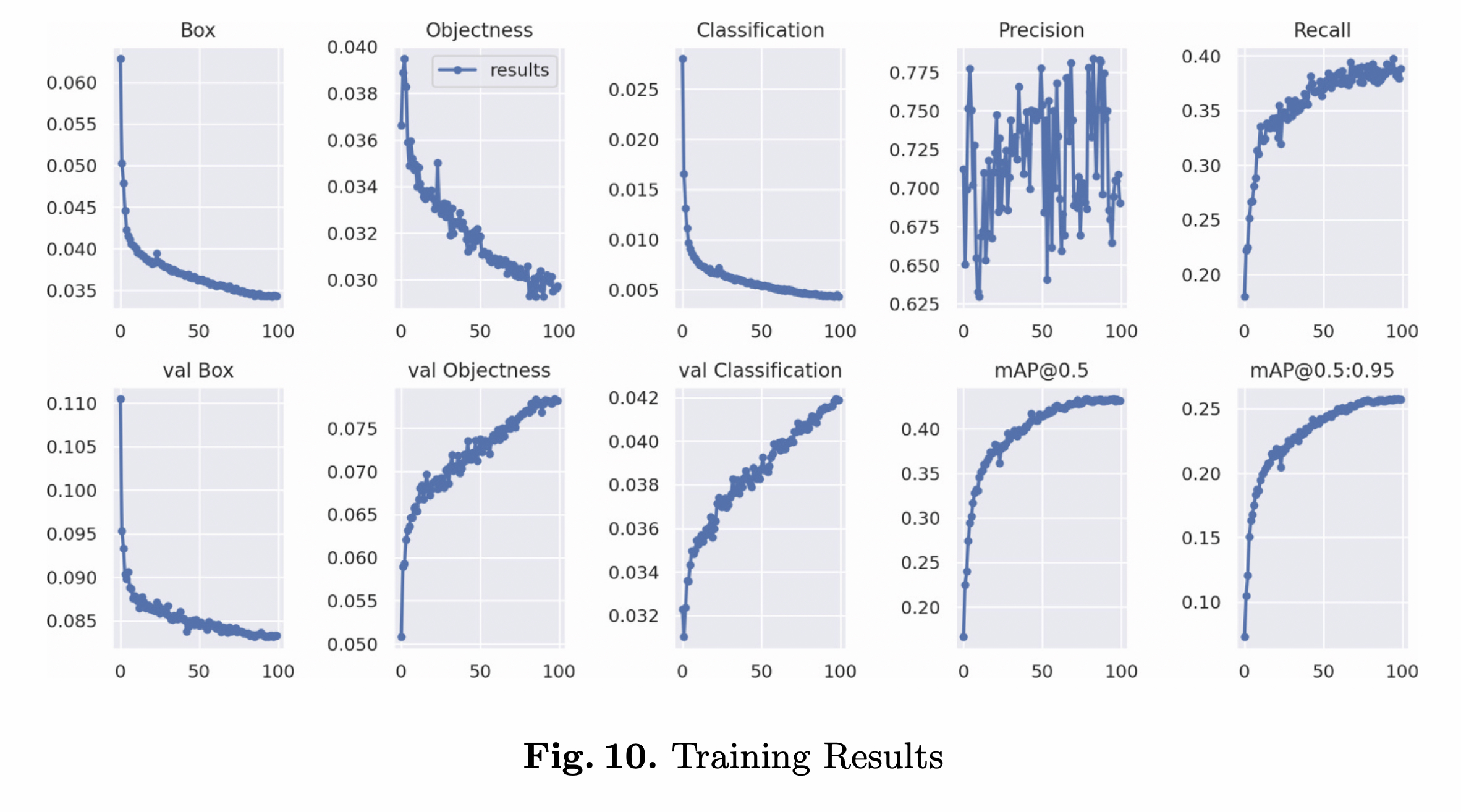

On the modeling side, we achieved an mAP@0.5 of 0.681, with strong per-class precision for buses (0.800) and autorickshaws (0.799). Those results mattered to us because they showed that transfer learning could adapt well to India-specific traffic patterns.
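For readers new to the metric: mAP@0.5 counts a predicted box as correct only when its class matches and its intersection-over-union (IoU) with a ground-truth box is at least 0.5. Average precision is then computed per class and averaged across classes. The sketch below shows only the IoU matching step. It is not the evaluation script we used, and the box format `(x1, y1, x2, y2)` and the function names are assumptions made for the example.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) corner coordinates


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    # Corners of the overlapping rectangle (empty if the boxes do not overlap).
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_true_positive(pred: Box, truth: Box, threshold: float = 0.5) -> bool:
    """A same-class prediction counts as a match when IoU meets the threshold."""
    return iou(pred, truth) >= threshold
```

As an example, a prediction shifted by half its width against a same-sized ground-truth box overlaps by one third, so it would not count as a match at the 0.5 threshold.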